What Is the Biggest Problem in Project Management That Nobody Talks About?

If you ask ten project managers what the biggest problem in their field is, you get ten different answers. And almost all of them are wrong.

Not wrong as in "that's not a real problem." Wrong as in "that's a symptom, not the cause."

The Usual Suspects

"Bad communication." The classic. Teams don't talk to each other, information gets lost, misunderstandings happen. Sure. But why? Everyone has Slack, Teams, email, standups, retros, wikis. We have more communication channels than ever. The information is being communicated. It's just not arriving where it needs to be, when it needs to be there.

"Scope creep." Requirements keep growing, the project keeps expanding, nobody says stop. Real problem. But look closer: scope doesn't "creep." It changes in concrete moments. A customer email. A stakeholder call. A revised wireframe. The change happens. What doesn't happen is that the project plan, the budget, and the timeline get updated to reflect it.

"Wrong methodology." If only we were agile. If only we were less agile. If only we did SAFe. If only we stopped doing SAFe. Every few years the industry picks a new framework and promises it will fix everything. It never does. The methodology changes, the same projects still fail.

"Lack of resources." Not enough people, not enough budget, not enough time. Sometimes true. More often, the resources would be enough if they were allocated based on what's actually happening instead of what was planned three months ago.

"Poor leadership." The PM doesn't escalate. The steering committee doesn't decide. The sponsor is absent. Again, real. But ask yourself: on what basis would they escalate or decide? What information do they have? How old is it?

All of these are real. None of them are the root cause. They're all downstream of something else.

The Clean Desk

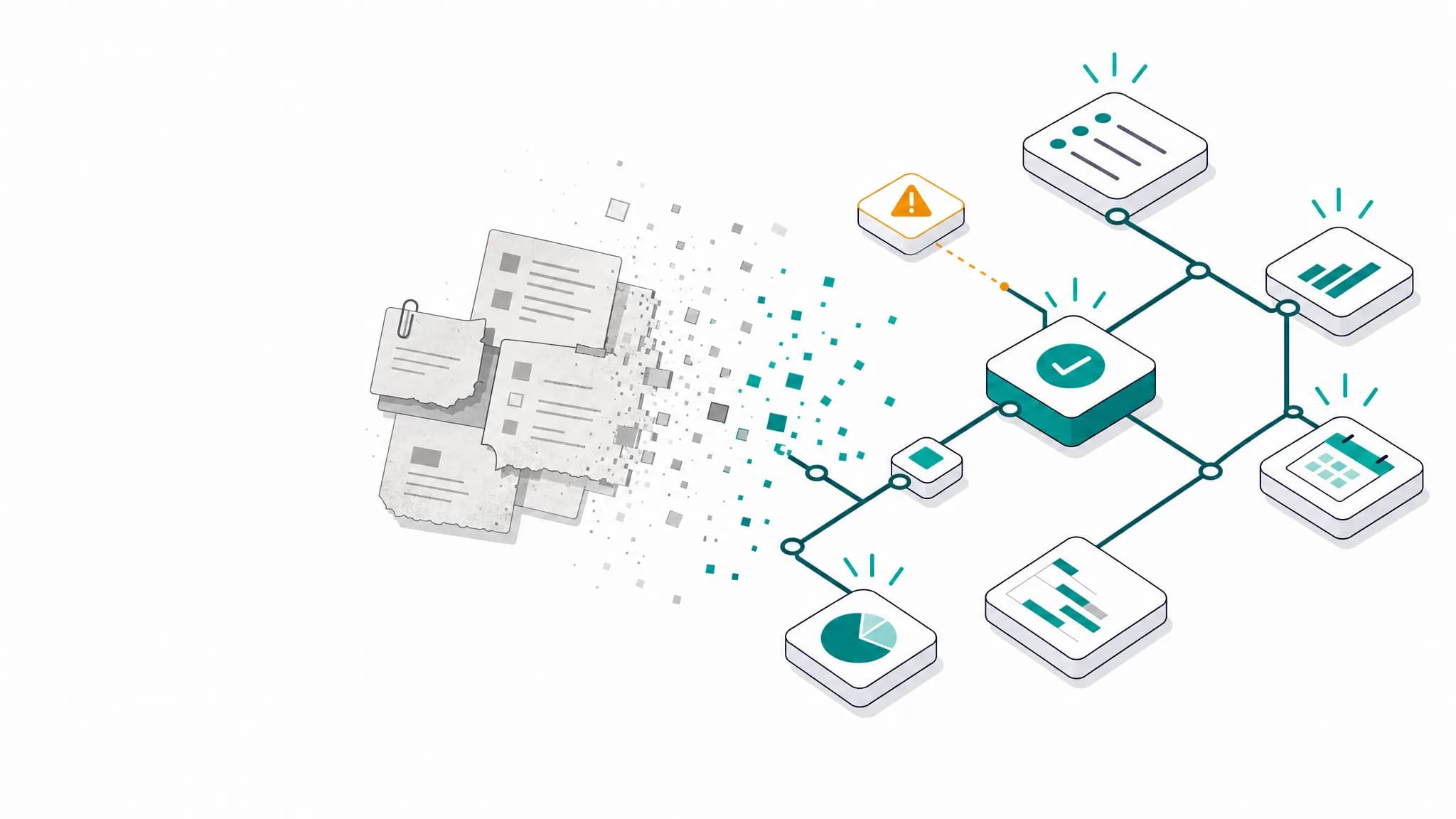

Picture the start of a project. Everything is in order. The project plan is current. The requirements document is signed off. The solution design matches the requirements. Reporting reflects reality. Every ticket in the backlog traces back to an actual requirement.

The project manager's desk is clean. The documents on it match what's actually happening.

This lasts about a week.

Then reality starts piling up. An email from the customer mentions a "small clarification" that actually changes the scope. A colleague says in a hallway conversation that the API team is two weeks behind. A stakeholder casually reprioritizes in a call nobody took notes on. The UX designer sends a revised wireframe that contradicts the signed-off requirements.

The desk gets messier. The project plan still says what it said last week. The requirements doc still has the old scope. The solution design still matches requirements that no longer exist.

For a while, the project manager holds it all together in their head. They know the real status. They know which documents are still accurate and which ones aren't. They mentally adjust when someone quotes the project plan. They remember the email, the hallway conversation, the call.

This works until the PM is sick for a day. Until they go on vacation. Until the project gets big enough that one person's memory can't bridge the gap anymore. Until a new team member reads the requirements document and takes it at face value.

The Real Answer

The biggest problem in project management is stale context. People making decisions based on information that was accurate at some point but isn't anymore.

Not wrong information. Not missing information. Outdated information. Information that was true when it was written down and has silently stopped being true since.

Every "bad decision" by a stakeholder, every "miscommunication" between teams, every "scope creep" that blindsided a project: trace it back far enough, and you find someone acting on context that had expired.

The reason nobody talks about it is that stale context doesn't look like a problem. It looks like a project plan. It looks like a requirements document. It looks like a risk register. It looks like valid, professional, well-structured project documentation. You can't tell by looking at it that it no longer matches reality.

Why All the Usual Suspects Are Symptoms

Go back to the list above. Every single one dissolves once you see it through this lens:

"Bad communication" is context that didn't propagate fast enough. The developer knew about the blocker. The information existed. It just didn't reach the PM before the status meeting.

"Scope creep" is a scope that changed while the baseline stayed frozen. The requirements moved, the document didn't. The gap between the two is stale context.

"Wrong methodology" is usually the right methodology running on outdated inputs. Agile with a two-week-old backlog is not agile. It's waterfall with standups.

"Lack of resources" is often enough resources allocated to last month's priorities. The world moved on, the resource plan didn't.

"Poor leadership" is leaders deciding on information they received too late, in summaries too compressed, from reports already stale when they were written.

Stale context is not a problem. It is the problem. Everything else is a symptom.

The Decay Rate

Not all information goes stale at the same speed.

Fast decay (hours to days):

- Task status and blockers

- Team availability

- Sprint progress

- Bug severity and priority

Medium decay (days to weeks):

- Risk assessments

- Resource allocations

- Timeline estimates

- Stakeholder priorities

Slow decay (weeks to months):

- Strategic goals

- Architecture decisions

- Budget allocations

- Team composition

Most project management practices treat all information as if it decays slowly. Quarterly reports. Monthly risk register updates. Sprint-level budget reviews.

The critical operational data (who's blocked, what changed, what broke) decays in hours. By the time it reaches a weekly status meeting, it's archaeology.
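The three decay classes can be turned into a simple freshness check. The sketch below is illustrative only: the thresholds in `MAX_AGE`, the function name `is_stale`, and the dates are assumptions picked from within the ranges listed above, not measured values.

```python
from datetime import datetime, timedelta

# Hypothetical review intervals per decay class; tune these to your project.
MAX_AGE = {
    "fast": timedelta(days=1),     # task status, blockers, sprint progress
    "medium": timedelta(weeks=1),  # risks, allocations, timeline estimates
    "slow": timedelta(weeks=6),    # strategy, architecture, budget
}

def is_stale(decay_class: str, last_updated: datetime, now: datetime) -> bool:
    """Return True when an item is older than its decay class allows."""
    return now - last_updated > MAX_AGE[decay_class]

now = datetime(2026, 3, 16, 9, 0)
three_days_ago = now - timedelta(days=3)

print(is_stale("fast", three_days_ago, now))  # a blocker status: True
print(is_stale("slow", three_days_ago, now))  # an architecture decision: False
```

The same three-day-old timestamp is stale for a blocker and perfectly fine for an architecture decision. That asymmetry is the whole point of sorting information by decay rate.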

AI Has the Same Problem

AI in 2026 is impressive. It can research your project autonomously, draft meeting agendas, summarize weeks of conversations, generate status reports. The capabilities are real.

The problem is what it uses as input.

You ask your AI copilot to prepare a meeting agenda for tomorrow's steering committee. It goes through your project data, pulls open items, cross-references recent updates. The result looks professional. Clean structure, right topics, relevant context. You almost send it out.

Then you read the details. Half the items on the agenda were resolved three weeks ago. The "open risk" it flags was mitigated in a call two Mondays ago. The dependency it highlights as critical was de-scoped last sprint. The AI did exactly what you asked. It researched thoroughly and produced a polished result. It just used information from four weeks ago as its reference, and all of it has moved on since.

Or you ask for a project status summary. The AI reports that a new bug was filed last week and flags it as a current blocker. What the AI doesn't know: the developer already told you last Friday that it was a misconfiguration, not a bug. Took five minutes to fix. But that conversation happened in a hallway, not in a ticket. The AI only sees what's written down, and what's written down is stale.

The AI is doing its job. The context it's working from is just as outdated as the risk register that nobody updated. Same disease, different patient.

AI in project management keeps disappointing, and the models aren't the reason. The context is. Everyone can tell the result is useless. Nobody can tell why. In 2024, the problem was obvious: you had to manually export data, paste it into a chat window, and hope you picked the right files. Today's AI agents are past that. They can search your project data autonomously, pull tickets, read documents, cross-reference conversations. They often find exactly the right context.

The right context, not the fresh context.

The AI correctly identifies the requirements document as relevant. That document just hasn't been updated since the customer call last Tuesday. The AI correctly pulls the open bug tickets. One of those bugs was resolved in a hallway conversation that never made it back into the system. The AI does the right research. The research leads to the right documents. The documents contain the wrong information.

This is harder to catch than the old copy-paste problem. When you manually fed the AI, you at least had a moment of "wait, is this still current?" Now the AI finds the sources on its own, and the result looks so thoroughly researched that you trust it. The stale context is buried under a layer of competent execution.

The current industry response is to connect more sources. More integrations. More data pipelines. Three MCP servers, five API connections, real-time sync with every tool in the stack. But connecting more sources doesn't solve the problem if half the data in those sources is already outdated. You're just giving the AI faster access to stale information. External sources are signals, not truths. Treating them as ground truth is how you automate bad decisions at scale.

The shift that actually matters is not giving AI access to more data. It already has plenty. The shift is using AI to keep context fresh in the first place.

Instead of letting the AI loose on ten outdated sources to produce a polished but stale report, flip it around. Use AI as a filter. A daily context updater.

The workflow looks like this: A new customer email comes in. The AI reads it and determines whether it's relevant to the project context. If it is, it identifies what needs to change. The requirements scope? A risk assessment? A timeline assumption? The AI drafts a concrete update proposal. The project manager reviews it, approves or adjusts, and the project context is fresh again. One email processed, one update applied, thirty seconds of human attention.

Now multiply that across every input channel. Slack messages, call notes, ticket comments, meeting transcripts. Each one gets filtered, assessed for relevance, and turned into a concrete context update that a human approves. The AI doesn't decide what's true. The PM does. The AI just makes sure nothing falls through the cracks.
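A minimal sketch of that filter-and-approve loop, under loud assumptions: `Proposal`, `ProjectContext`, `propose_update`, and `process` are hypothetical names, and the "AI" step is replaced by a trivial keyword rule so the example runs on its own. It is not TensorPM's implementation. What it shows is the shape of the workflow: nothing touches the context without a human saying yes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

@dataclass
class Proposal:
    field_name: str   # which part of the project context would change
    new_value: str    # the proposed replacement text
    source: str       # the raw input that triggered the proposal

@dataclass
class ProjectContext:
    fields: Dict[str, str] = field(default_factory=dict)

    def apply(self, proposal: Proposal) -> None:
        self.fields[proposal.field_name] = proposal.new_value

def propose_update(message: str) -> Optional[Proposal]:
    """Stand-in for the AI step: a keyword rule instead of a model call."""
    if "deadline" in message.lower():
        return Proposal("timeline", message, source=message)
    return None  # judged not relevant to the project context

def process(inbox: List[str], context: ProjectContext,
            approve: Callable[[Proposal], bool]) -> None:
    for message in inbox:
        proposal = propose_update(message)
        # Nothing is applied unless the human reviewer approves it.
        if proposal is not None and approve(proposal):
            context.apply(proposal)

context = ProjectContext({"timeline": "Go-live on 1 June"})
inbox = ["Lunch menu for Friday", "Customer asks to move the deadline to 15 June"]
process(inbox, context, approve=lambda p: True)  # a real PM would review each one
print(context.fields["timeline"])
```

The `approve` callback is where the PM sits. Swap the lambda for a review screen and the control flow stays the same.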

Do this daily, and two things happen. Every stakeholder has access to a project context that reflects this morning's reality. And when you then ask AI to help prepare a meeting, write a status report, or assess a risk, it's working from a context that is already clean and current. No more researching across stale documents. The distilled context is the source of truth.

This is the approach we're building with TensorPM. AI as a context filter, not a context consumer. Human in the loop for every update. The PM stays in control. The context stays alive.

The Half-Life of a Decision

Every decision has a half-life: the time after which there's a 50% chance the decision is no longer optimal, because the underlying context has changed.

For operational decisions (task assignments, daily priorities): hours. For tactical decisions (sprint goals, resource allocation): days. For strategic decisions (roadmap, architecture): weeks to months.

If you're not revisiting decisions faster than their half-life, you're accumulating stale context debt. Like technical debt, it compounds silently until something breaks.
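To put rough numbers on this, assume decisions decay exponentially, the way radioactive material does. That model is an assumption added here for illustration; the definition above only fixes the 50% point. The function name `still_valid_probability` is hypothetical.

```python
def still_valid_probability(age_days: float, half_life_days: float) -> float:
    """Chance a decision is still optimal, assuming exponential decay."""
    return 0.5 ** (age_days / half_life_days)

# A tactical decision with an assumed half-life of 3 days:
print(still_valid_probability(3, 3))    # 0.5 by definition of half-life
print(still_valid_probability(14, 3))   # roughly 0.04 after a two-week sprint
```

Under this model, a sprint goal that is never revisited during a two-week sprint has only a few percent chance of still being the best call at the end of it.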

Your project has stale context right now. The question is: how fast are you detecting and correcting it?

What Keeps Context Alive

The obvious answer is "update your documents more often." That's like telling someone with a leaking roof to mop faster.

The real question is: why does context decay? Not because people are lazy. Because the cost of updating is too high. Every time something changes, someone has to recognize the change is relevant, figure out which documents it affects, open each one, find the right section, update it, and notify everyone who needs to know. For one change. Multiply that by twenty changes a week.

Nobody does this. Not because they don't care, but because the friction of updating exceeds the perceived benefit. It builds up silently until the accumulated staleness causes a crisis.

The solution isn't discipline. It's reducing the cost of an update to near zero.

That requires a different approach. Instead of asking humans to manually propagate every change across every document, you feed raw inputs (emails, meeting notes, revised specs) into a system that detects what changed relative to the current project context and proposes specific, atomic updates. The human reviews and approves. Thirty seconds instead of thirty minutes.

Most project tools are designed to store and display information. They're optimized for organizing what you put in. They have no mechanism for detecting that what you put in last month no longer matches reality.

A system that keeps context alive needs to do the opposite: continuously compare incoming signals against the current state and surface the delta. Not more dashboards. Not more integrations. A feedback loop between the real world and the project model, with a human as the final checkpoint.
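"Surface the delta" can be sketched in a few lines once incoming signals have been reduced to structured facts. That reduction is the hard part and is assumed to have happened already. The function name `surface_delta`, the field names, and the dates are all hypothetical.

```python
from typing import Dict, List, Optional

def surface_delta(current: Dict[str, str],
                  observed: Dict[str, str]) -> List[Dict[str, Optional[str]]]:
    """List every observed fact that differs from the stored project context."""
    updates = []
    for key, new_value in observed.items():
        old_value = current.get(key)  # None when the context has no entry yet
        if old_value != new_value:
            updates.append({"field": key, "old": old_value, "new": new_value})
    return updates

current = {"api_delivery": "2026-04-01", "scope": "v1 checkout"}
observed = {"api_delivery": "2026-04-15", "scope": "v1 checkout"}

for update in surface_delta(current, observed):
    print(update)  # one proposed change: the API delivery date moved
```

Each returned entry is one atomic update for a human to confirm or reject. Unchanged facts produce no noise.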

This creates a loop. AI checks incoming information for relevance and proposes context updates. The project manager confirms or rejects. No more hunting through emails and documents yourself. And because the context stays current, the AI gets better too. It stops working from stale snapshots and starts reasoning on what's actually true right now. Better context makes better AI. Better AI makes fresher context.

Context Freshness Audit

This takes ten minutes and no new tools:

- List your key decision documents: project plan, risk register, budget, requirements, stakeholder matrix.

- For each document, note when it was last updated.

- For each document, note when it was last read by a decision-maker.

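- Calculate the gap: the time since the older of those two dates.

If your risk register was updated 3 weeks ago and your CEO last read it 5 weeks ago, the CEO is deciding on a version that is at least five weeks old. That's a 5-week gap between reality and the decisions being made. Five weeks of stale context.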

Multiply that across every document, every stakeholder, every decision. That's your project's stale context debt.
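If you'd rather let a script do the arithmetic, here is a small sketch. It takes one reading of "the gap": a decision-maker's picture is at least as old as the older of the last update and the last read. The function name `context_gap_days`, the document list, and all dates are made up for illustration.

```python
from datetime import date, timedelta

def context_gap_days(last_updated: date, last_read: date, today: date) -> int:
    """Days between today and the older of the last update and the last read."""
    return (today - min(last_updated, last_read)).days

today = date(2026, 3, 16)
documents = {
    # name: (last updated, last read by a decision-maker)
    "risk register": (today - timedelta(weeks=3), today - timedelta(weeks=5)),
    "project plan": (today - timedelta(weeks=1), today - timedelta(days=2)),
}

for name, (updated, read) in documents.items():
    print(name, context_gap_days(updated, read, today), "days")
```

For the risk register this gives 35 days, matching the five-week example above. Sort the output by gap and you have a priority list for what to refresh first.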

Most teams that run this exercise are shocked. Gaps of weeks or months are common, even in "agile" organizations that pride themselves on fast feedback loops.

Conclusion

We spend enormous energy on methodologies, tools, and processes. Agile, Waterfall, SAFe, Kanban. They all promise to fix project management. They all help, to a degree.

None of them directly address the root cause: the relentless decay of context.

The teams that consistently deliver are the ones with the freshest context. They know what's true right now, not what was true last week. They make decisions on live data, not on archaeological artifacts dressed up as status reports.

AI won't fix this either, unless it's connected to live context instead of working from stale snapshots.

The most valuable thing you can do for your project is to reduce the time between "something changed" and "everyone who needs to know, knows." Everything else follows from that.

Read next: What Is Agentic Project Management? Definition, Framework & Tools (2026 Guide) — stale context isn't just a project management problem; it's the failure mode that breaks AI agents at machine speed. The pillar piece explains how a living project graph turns the freshness loop into an operating model.

About the Author

Simon Schwer is a Project Manager with nearly a decade of experience on international projects. After watching too many good teams fail on stale information, he started developing Context-Driven Project Management (CDPM), a framework that puts living context at the center of every project decision.

Corrections

No corrections to date.